Welcome to “Building in the Fog”

A blog about navigating AI uncertainty to build a future we actually want

It’s hard to get a clear picture of what’s going on in AI

You may have heard that AI is an enormous financial bubble. Or not, depending on what you’re reading.

There’s so much discussion that some are speculating the volume of ‘AI bubble’ talk is itself unsustainable. An ‘AI bubble’ bubble, if you will.

At the heart of the bubble debate, people are really just trying to work out: “Is AI going to be a Very Big Deal very soon?”¹

The answer is an unsatisfying maybe. Derek Thompson and Timothy B. Lee have a great post summarising the 12 most common arguments for and against an AI bubble. At the end they give their own opinions: Derek thinks we’re in a bubble, Tim thinks possibly not.

Beyond the immediate financial realities of the AI buildout, there are deeper disagreements about where this is all headed. AI 2027 and AI as Normal Technology were two essays published in April 2025 which both had a large influence on AI policy debate.

AI 2027 is a scenario forecasting the development of Artificial General Intelligence (AGI)² in 2027³. This AGI massively accelerates AI research, producing ever-better AI models, and ultimately risks human extinction by 2030.

AI as Normal Technology argues that the transformative economic and societal impacts of AI are more likely to materialise over decades, rather than years. Current AI capabilities are overstated, and improvements will require the clunky and slow process of testing in the real world.

It’s worth flagging that both groups have more common ground than you might think. Both ultimately expect AI to be at least as big a deal for society as the internet, just on different timescales and with different upper bounds.

I cannot stress enough: no one knows for certain which of these scenarios is closer to the truth. Amongst other things, it is not clear how far the current paradigm of scaling large language models can go, how much AI will be able to improve AI, and the extent to which AI models will remain tools for humans to use vs autonomous agents.⁴

AI 2027 and AI as Normal Technology are both plausible views held by people who understand AI deeply. And yet they have wildly different implications for what we should be doing now.

Even at the very top, it’s unlikely that anyone has the full strategic picture. Sam Altman doesn’t know everything going on inside Anthropic. He probably knows even less about Safe Superintelligence Inc.⁵ The US government probably knows some of what’s going on inside US companies, but has far less insight into Chinese AI development.

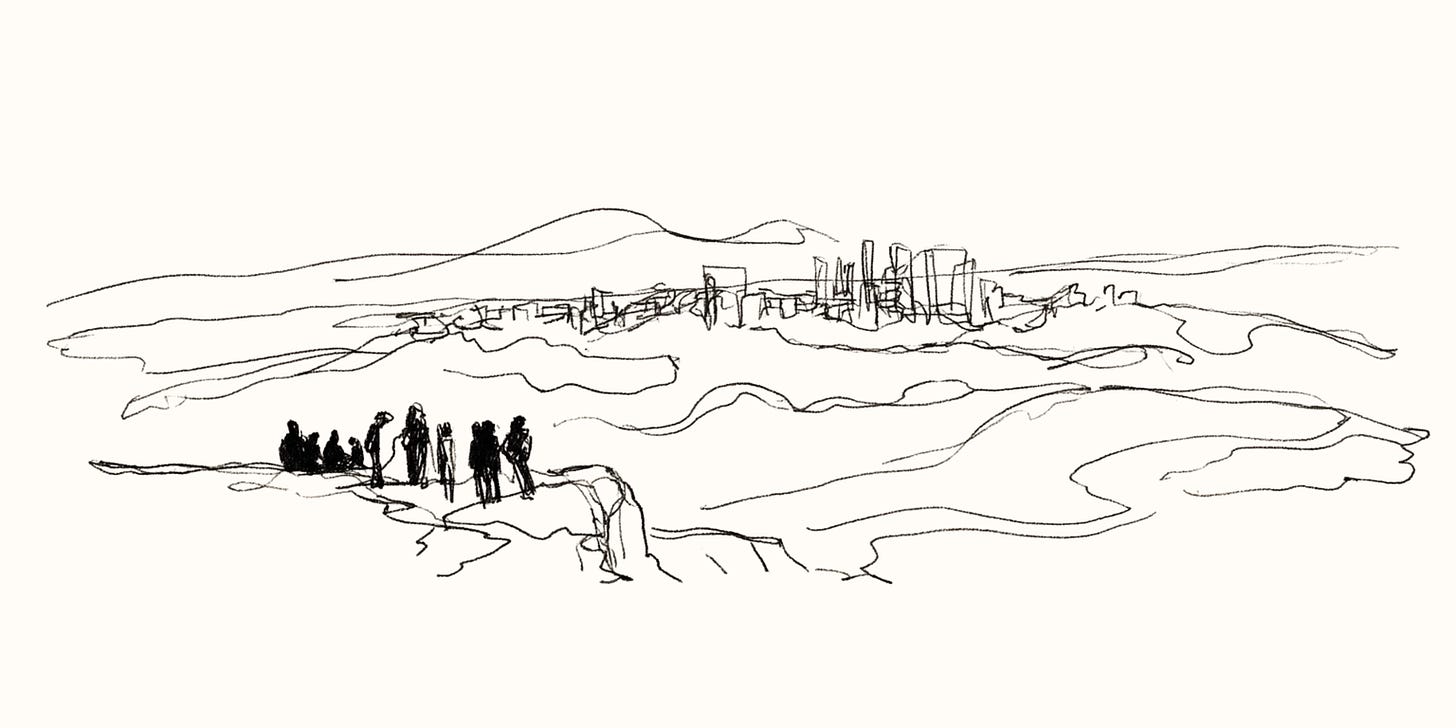

The development of the most important technology of our time is shrouded in fog. Technological uncertainty, corporate secrecy, and a host of unknown unknowns mean the path forward is unclear even to those deep in the field.

The uncertainty can feel paralysing

Smart, thoughtful people who I talk to about AI often say some version of “yeah it seems like a big deal, but I don’t know where to start”. AI will impact everything, from education to national security, employment to democracy. So which part of everything should you work on?

Even if you have a particular area of interest, it’s hard to know what to do about it. Let’s say you’re concerned about AI models assisting people to build bioweapons.⁶

First of all, it’s not easy to work out how good AI models actually are at this. A review from January 2025 concluded that there was no evidence that LLMs could meaningfully assist with building bioweapons.

Since then, OpenAI and Anthropic have both released models that they determined could assist a novice to build and deploy a bioweapon. To test this they gave their models questions on biology knowledge, reasoning, applied skills, and creativity. These questions are just proxies for ‘can it actually help someone build a virus?’, but trials are happening right now in real labs to test this capability directly.⁷

If you’re convinced that we should prepare for a world where dangerous biological information is easy to access, it’s still hard to know what to do about it. Trying to make sure AI models don’t give out bioweapon recipes might seem like the logical place to start (and it’s an important part of the puzzle!), but you’re quickly confronted with questions such as:

What counts as “dangerous biological information”?

How do you decide which legitimate actors can have access to more powerful bio-AI models?

Even if you put safeguards on your models, what’s to stop someone else releasing one without them, or removing guardrails from an open-source model?

It might also be that the more important places to intervene are downstream of the AI models, such as securing DNA synthesis or building air sanitation systems to make us less susceptible to airborne diseases.

Finally, the thing you do to help might be actively harmful. Running an experiment to see which AI models are the most vulnerable to jailbreaking on biosecurity topics would be useful information for governments and model developers. However, it would also be very useful information for a bad actor looking to cause harm.

If you care about making AI go well for the world, it’s easy to see this fog all around and feel a sense of helplessness. It feels reckless to plough ahead when we can barely see past our noses. And yet doing nothing is also a choice.

The way to make your voice heard is to build

The fog makes it easy to speculate and hard to take action. “Is AI a bubble?”, “Will AI take my job?”, “Will AI take over the world?” are all questions I hear regularly.

Notice the lack of agency in all of these questions. For most people, AI feels like something that is happening to them, rather than something they can affect.

To some extent they’re right. Sam Altman, Dario Amodei and Demis Hassabis certainly aren’t consulting the public before ploughing ahead with each model release. But those three have influence because they are building things. Not everyone can start an AI lab, but building something real matters far more than just having an opinion.

Matt Clifford has an analogy to scales, where the future is determined by the cumulative weight of people’s ambition, not just the sheer number of people who hold an opinion.

“Through [building] technology, individuals get to vote for the kind of future they would like. But the world is a weighing, not a counting, machine: small groups of people can have disproportionate impact through sheer force of will and ambition.”

This extends beyond just building technologies. The future we get will also be shaped by the new political movements, institutions, international treaties, research fields and ideologies that people choose to build in the next decade or so.

We need more than just software and machine learning engineers working on these problems. Lawyers are best-placed to build the legal frameworks governing AI agents. Economists are best-placed to build the models that predict and shape how AI affects the labour market. Community leaders will be the ones building local institutions that help people adapt to AI-driven change.

Getting AI right will require all of these builders and more.

You can’t wait for the fog to clear

The foundations laid in the fog will determine the future we see when it clears. If you wait, you will be left staring at what others have built.

There are many decisions over the coming years that will shape the future we get. Who controls powerful AI systems? How should AI agents interact with our world? Who is liable if things go wrong? At what point should we choose not to train more powerful models? These decisions will be made under immense uncertainty, by people most comfortable operating in it.

Some things may become clearer as AI diffuses through the economy. Whether we start to see models significantly accelerate AI research or act autonomously for long periods will begin to resolve the disagreements between AI 2027 and AI as Normal Technology. But if we do see signs of runaway AI self-improvement, there won’t be much time to react. We need to prepare for that scenario ahead of time.

Other things may become foggier. The gap between those who are using frontier AI systems regularly and those who aren’t will continue to widen, muddying the public discourse on AI capabilities. Persuasive AI-powered disinformation could make it harder to discern what’s real and what isn’t. If international AI competition intensifies, governments and AI companies may become more secretive, giving us less transparency on AI development.

I’ve seen too many thoughtful people paralysed by the fog, waiting for certainty before they’ll take a step. But certainty isn’t coming; the fog is the climate, not just a passing storm. For a technology whose epicentre is San Francisco, maybe we shouldn’t be surprised.

Your torch and hammer

The aim of this blog, and of BlueDot, is to empower the world’s most ambitious, kind, thoughtful people to build in the fog.

We want to give everyone a torch and a hammer.

The torch is to reduce uncertainty. To help you peer as far through the fog as it is currently possible to do. To give you context on the current state of play in AI, and to see the contours well enough to determine what you should build and why you should build it.

The hammer is to boost your agency. To empower you to start building even though the path forward is unclear. There will come a time when you have done all the reading, thinking and learning you can do, and the only way to gain more information is to start building your own path through the fog.

At BlueDot, we’ve helped thousands of people understand where AI is going and what they can do about it. Hundreds now work directly on these problems: in lab safety teams, think tanks, governments, and new organisations they founded themselves. We run courses, events around the world, hackathons and incubators to get people building.

Deciding to write this blog is also to peer through the fog for myself. To work out what I actually think by forcing myself to write it down. To put those thoughts on paper and interrogate them. To get feedback from others who have thought more deeply than I have. To look again and again at the path ahead until I can see enough to take the next step.

The window for human agency over this technology may not stay open forever. But for now it’s humans who decide, and the things we choose to build that matter. Let’s do everything we can in this window to make sure the future is one we actually want.

If you’re ready to pick up your own torch, our free Future of AI (2 hours, self-paced) and AGI Strategy (25 hours, group discussions) courses are the place to start.

1. More specifically, “Will AI capabilities improve enough in the next few years to generate $100Bs of revenue before companies have to pay off the $100Bs of debt they’ve racked up?”

2. Roughly, an AI system that is equal to or better than humans at all cognitive tasks.

3. They have since updated this estimate to around 2030. Phew, plenty of time!

4. Helen Toner has a great post laying out the main uncertainties on these three topics.

5. They have raised $3B and are valued at $32B. Yes, that is their entire website.

6. Which many people are, including the US government, UK AI Security Institute, OpenAI and Anthropic.
